Protecting yourself from the latest scams that use AI technology to impersonate your loved ones

We are dedicated to informing you about the latest cybersecurity threats and helping you protect your personal information. In recent years, scammers have increasingly used artificial intelligence (AI) technology to create sophisticated scams, making them even more challenging to detect. One of the most alarming trends is the use of AI-generated voice scams, which involve impersonating the voices of your loved ones to manipulate you into sending money or revealing sensitive information. In this article, we’ll explore how these scams work and provide tips on protecting yourself from falling victim to them.

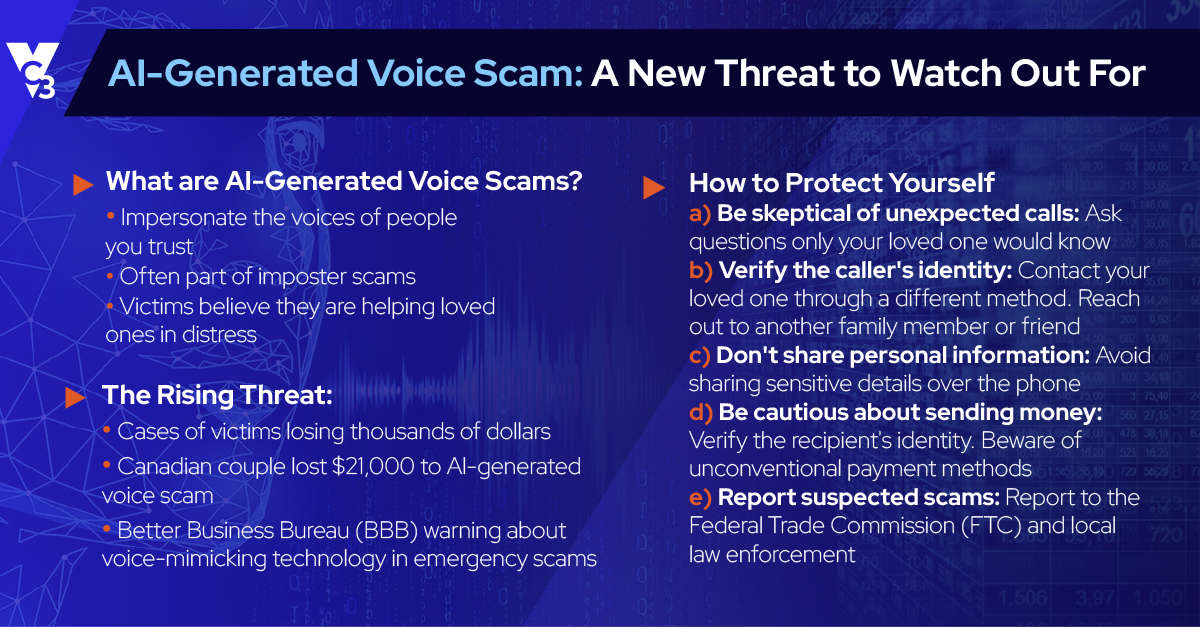

AI-Generated Voice Scams: What You Need to Know

AI-generated voice scams use advanced technology to mimic the voices of people you trust. They are often part of imposter scams, where scammers pretend to be a distressed family member or friend asking for financial assistance. The victims are led to believe they are helping their loved ones during an emergency, making it easier for scammers to exploit their emotions and extract money or personal information.

Recent reporting, including an article published by Ars Technica, describes these scams as on the rise, with victims losing thousands of dollars to scammers using AI-generated voices. In a story shared by Business Insider, a Canadian couple was reportedly scammed out of $21,000 by criminals who used AI to mimic the voice of their adult son. The Better Business Bureau (BBB) has also warned about voice-mimicking technology being used in emergency scams.

How to Protect Yourself from AI-Generated Voice Scams

- Be skeptical of unexpected calls: Be cautious if you receive an unexpected call from someone who sounds like a loved one and claims to be in distress. Scammers try to create a sense of urgency so that you act before you can think clearly. Don’t be afraid to ask questions that only your loved one could answer.

- Verify the caller’s identity: Before providing assistance, hang up and contact your loved one yourself through a different method, such as texting them or calling the number you already have for them. Alternatively, contact another family member or friend to confirm whether your loved one is really in trouble.

- Don’t share personal information: Never share sensitive information, such as Social Security numbers or bank account details, with anyone over the phone, especially if the call is unsolicited.

- Be cautious about sending money: Scammers often ask for money to be sent through methods that are hard to trace or reverse, such as wire transfers, prepaid cards, or cryptocurrencies. Treat any such request as a warning sign, and always verify the recipient’s identity before sending anything.

- Report suspected scams: If you suspect you’ve encountered an AI-generated voice scam, report it to the Federal Trade Commission (FTC) at ReportFraud.ftc.gov and to your local law enforcement. Reporting scams helps authorities track and combat these criminals.

Conclusion

AI-generated voice scams are a growing threat, and staying informed about this new type of fraud is essential to protect yourself and your loved ones.

Following the tips outlined above can reduce your risk of falling victim to these scams. At VC3, we’re committed to helping you stay safe in the digital world. Subscribe to our blog for the latest information on cybersecurity threats and tips on protecting yourself.